NVIDIA’s Nemoclaw announcement feels like a good excuse to finally write down a thesis I have been pondering for a while:

Every software company in the world needs to have an @openclaw strategy - Jensen at GTC

The rise of code agents at runtime, or claws as we now call them, is something I have been obsessing over for a few years.

The second-order effect I am pondering is this: software is no longer just something we use directly. It increasingly becomes something traversed, interpreted, and operated through high-agency agents. Over time, those agents may become the primary consumers of much of our software and information.

Now that we are starting to see early signs that this might actually become real, I keep coming back to a bigger question: what does that do to the market structure of software? What should we build that these agents will actually need?

The Naive Take

A lot of the current discussion jumps straight to the extreme conclusion. If generic agents can assemble workflows in real time from files, tools, and user context, then maybe a large part of SaaS just stops making sense.

I feel like the traditional apps & saas make no sense anymore cuz I can literally map every single work process into file structures and markdown files, then generate dashboards, CRUDs, and CRONs on top, using everything via an agent that learns and evolves in real time. - @johnrushx

That may well be true for some categories. Some products are mostly workflow wrappers, and those wrappers may get compressed away once the agent becomes the main operator.

But I do not think that is the whole story.

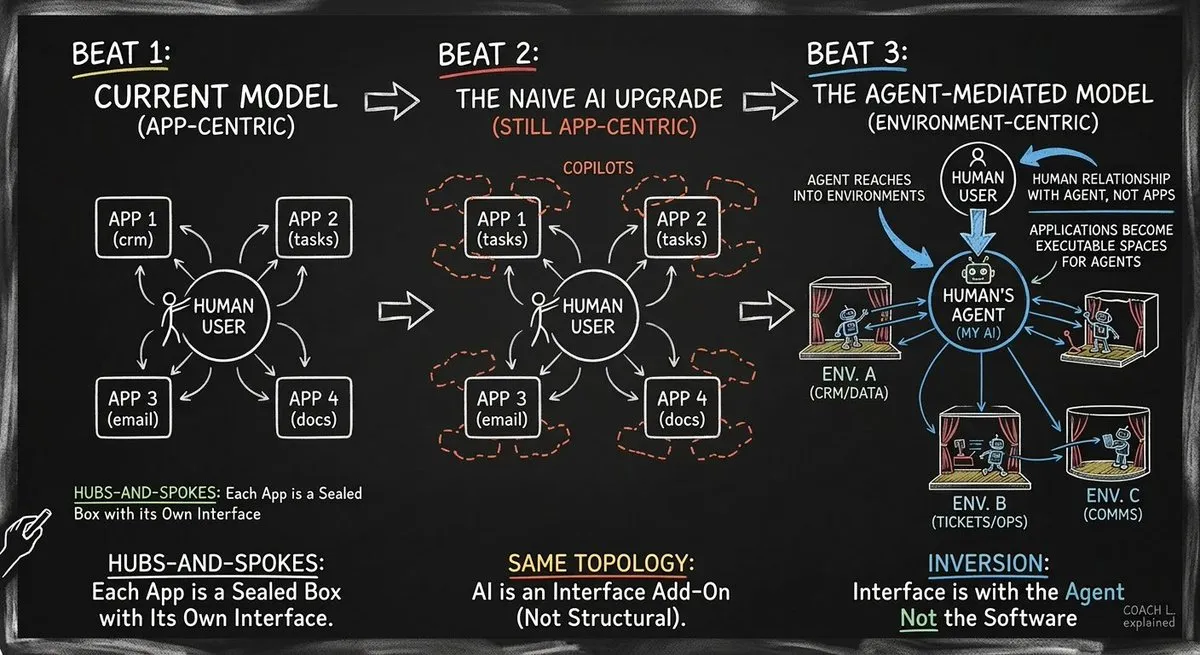

Beyond “An Agent for Every SaaS”

My view is that this shift runs deeper than “An Agent for every SaaS,” and it is also more nuanced than “everything just gets done by code agents.” If users and enterprises are increasingly mediated by their own agents, then the software market stops being organized mainly around applications and starts being organized around what agents need: environments where they can operate effectively.

At that point, the important question is no longer just which app has the best interface, workflow or features. In some cases, the go-to-market may not even sell to a human at all. The question becomes: which system gives an agent the best conditions to get a class of work done safely, reliably, and efficiently? We might measure that completely differently than today.

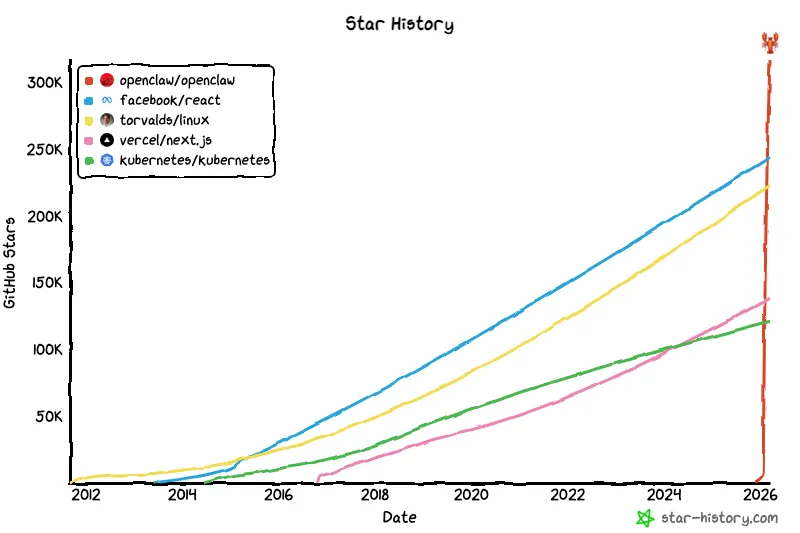

That is why releases like Nemoclaw catch my attention. Not because any one product settles the story, but because they make this form factor feel more real. More and more of the market seems to be converging on the idea that runtime agents are not just a gimmick (see adoption in China).

They are becoming a credible player for software consumption and execution.

After all, a standard does not have to be perfect. It just has to be adopted.

The Enterprise, Reimagined

Once you take that seriously, you start to picture enterprises differently. Not as organizations permanently bottlenecked by engineering time alone, but as organizations increasingly supported by highly capable agents that interface with the rest of the software market on their behalf.

At that point, the market starts to revolve around a different set of questions. For simplicity, I will use user and enterprise interchangeably here.

- Who decides to use the tool: the user, the agent, or some registry layer?

- Who executes the software, and which parts?

- Who delegates to whom: user to agent, agent to agent, or user to agent to agent? (Corollary: should you onboard agents? Should you sell to agents?)

- Who verifies intermediate work, and how do you avoid agents of chaos?

- Who learns: does the environment get better, do agents learn the app, or is learning split across layers?

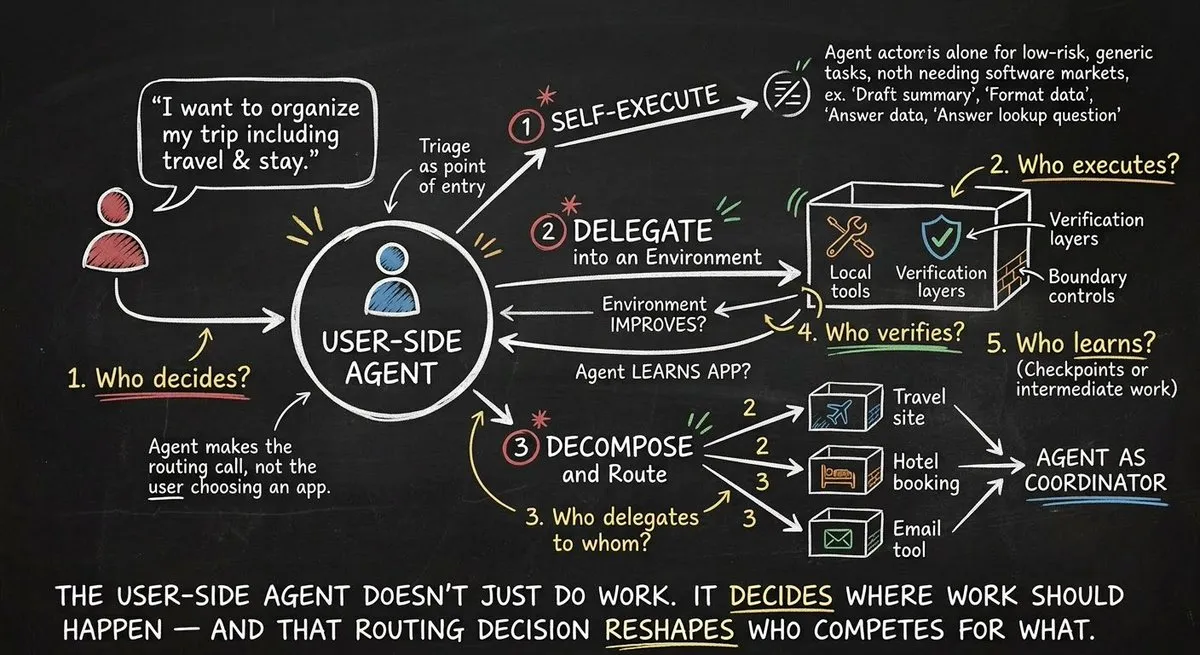

The Routing Decision

Another big shift: in an agent-mediated world, the first point of contact for the user is likely not the application. It is the user-side agent. The user states an outcome, and the agent interprets it first. Many user interfaces might get entirely “wrapped” by user-side agents.

From that assumption, I can imagine the user-side agent facing a common set of three choices (assuming agents are resource-aware and economically rational):

- First, it can execute directly when the task is generic, low risk, and easy to do from the outside.

- Second, it can delegate into an environment when the task benefits from better local context, stronger verifiers, safer action spaces, tighter controls, or more reliable recovery.

- Third, it can break the work apart and route it across several environments when the task spans multiple domains.

That means the user-side agent is not just doing work. It is deciding where work should happen: which environment (tools, feedback, simulation, …) will give it the highest likelihood of succeeding.
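The three choices above can be sketched as a small routing function. This is only an illustration under assumptions of my own: the `Task` fields, the `Route` names, and the `risk_threshold` default are all hypothetical, and a real agent would weigh far richer signals than three attributes.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    EXECUTE_DIRECTLY = "execute_directly"
    DELEGATE = "delegate"
    SPLIT_AND_ROUTE = "split_and_route"


@dataclass(frozen=True)
class Task:
    # Hypothetical schema: the post does not define what a task looks like.
    domains: frozenset[str]     # domains the task touches, e.g. {"billing"}
    risk: float                 # 0.0 (harmless) to 1.0 (high stakes)
    needs_local_context: bool   # benefits from state only an environment holds


def choose_route(task: Task, risk_threshold: float = 0.3) -> Route:
    """Pick one of the three choices; the threshold is an assumed knob."""
    # Third choice: work spanning several domains is broken apart and routed.
    if len(task.domains) > 1:
        return Route.SPLIT_AND_ROUTE
    # Second choice: risky or context-hungry work goes into an environment.
    if task.risk > risk_threshold or task.needs_local_context:
        return Route.DELEGATE
    # First choice: generic, low-risk work is done from the outside.
    return Route.EXECUTE_DIRECTLY
```

For example, `choose_route(Task(frozenset({"payments"}), risk=0.8, needs_local_context=False))` lands on `Route.DELEGATE`, while the same task with `risk=0.1` would be executed directly.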

Losing the direct relationship with the user, and with it the routing decision that determines your volume, is a seismic shift in demand behavior, and it is bound to reshape the supply side.

That is the market shift I keep circling back to.

Why Delegation Will Happen

Agentic delegation (i.e., an agent renting access to a specialist environment or agent) will not happen because an environment sounds elegant in theory. It will happen because the environment gives (measurably) better odds of success. Better safety. Better recovery. Better verification. Better trust boundaries. Better policies.

In other words, the agent will delegate when another system is clearly a better place to complete the work than the present solution or a custom solution.
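One way to make "clearly a better place to complete the work" concrete is an expected-value comparison against the outside option. The sketch below assumes the agent can estimate a success probability and a cost for each path. Those estimates, the function names, and the linear value model are my assumptions, not something the argument depends on.

```python
def expected_net_value(p_success: float, value: float, cost: float) -> float:
    """Expected payoff of one attempt: odds of success times value, minus cost."""
    return p_success * value - cost


def should_delegate(
    value: float,        # what a successful outcome is worth to the user
    p_direct: float,     # assumed odds of success when the agent acts itself
    cost_direct: float,  # assumed cost (tokens, time, risk) of acting directly
    p_env: float,        # assumed odds of success inside the environment
    cost_env: float,     # assumed fee for renting access to the environment
) -> bool:
    """Delegate only when the environment beats the outside option."""
    return expected_net_value(p_env, value, cost_env) > expected_net_value(
        p_direct, value, cost_direct
    )
```

With a task worth 100, a direct path at 60% success and cost 1, and an environment at 90% success for a fee of 10, delegation wins. If the environment only reaches 65%, the agent is better off staying outside.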

This is why I think some classes of work will move toward governed environments, rather than remaining tools or being competed away, as many argue. Specialization still has a place in the equilibrium.

Those environments may work in regulated domains, have access to privileged state, offer bounded action spaces, excel at approval flows, offer complex replay and rollback, include native verifiers, and offer safer execution conditions than what a general-purpose agent can assemble on the fly. Those are practical reasons to delegate.

The New Stack

If this plays out, the stack changes. The user agent receives an intent or target outcome. It decides whether to act directly, delegate, or route across multiple systems. Environments compete to become the best delegation targets. And a new strategic layer appears in the middle: the policy for deciding where work should live.

That may also mean market share starts to look less like software distribution in the old sense, and more like flow in a market. Work moves to where execution is best, trust is highest, cost is lowest, and outcomes are most reliable. In that sense, the software market may start to resemble financial markets: flow goes where execution quality is strongest.
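To illustrate "market share as flow", here is a toy model in which every task is routed to whichever environment scores best, and share is simply the fraction of routed work each one attracts. The `Environment` fields, the scoring formula, and the sample numbers are all made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Environment:
    # Hypothetical attributes standing in for execution quality, trust, and cost.
    name: str
    success_rate: float
    trust: float
    cost: float


def score(env: Environment, task_value: float) -> float:
    """Assumed scoring rule: trust-weighted expected value minus cost."""
    return env.success_rate * env.trust * task_value - env.cost


def flow_share(envs: list[Environment], task_values: list[float]) -> dict[str, float]:
    """Route each task to its best-scoring environment and report share of flow."""
    routed = Counter(
        max(envs, key=lambda e: score(e, value)).name for value in task_values
    )
    return {env.name: routed[env.name] / len(task_values) for env in envs}


envs = [
    Environment("premium", success_rate=0.95, trust=0.9, cost=5.0),
    Environment("cheap", success_rate=0.70, trust=0.8, cost=1.0),
]
shares = flow_share(envs, [10.0, 20.0, 100.0, 200.0])
print(shares)
```

In this toy setup the cheaper environment only wins the lowest-value task, while the higher-quality one attracts the rest. That is the sense in which flow follows execution quality rather than distribution.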

The analogy is useful because it shifts the basis of competition. Products are not only trying to attract users anymore. They are trying to attract routed work. They need to become legible to agents, easy to verify, reliable under repeated use, and consistently better than the outside option.

So the real question is not just whether agents compress parts of SaaS. It is what market forms underneath once agents become the main allocators of work.